LLMs: We're Still Asking the Wrong Question

Three years into the LLM era, the debate is still 'is AI smarter than humans?' That's the wrong frame. The right one is harder — and more consequential.

IQ tests were designed to measure one specific thing: how a human mind performs relative to other human minds. They are normed on human populations, calibrated for human cognition, and validated against human outcomes. Applying them to a large language model is a category error — like timing a fish in a footrace and declaring it slow.

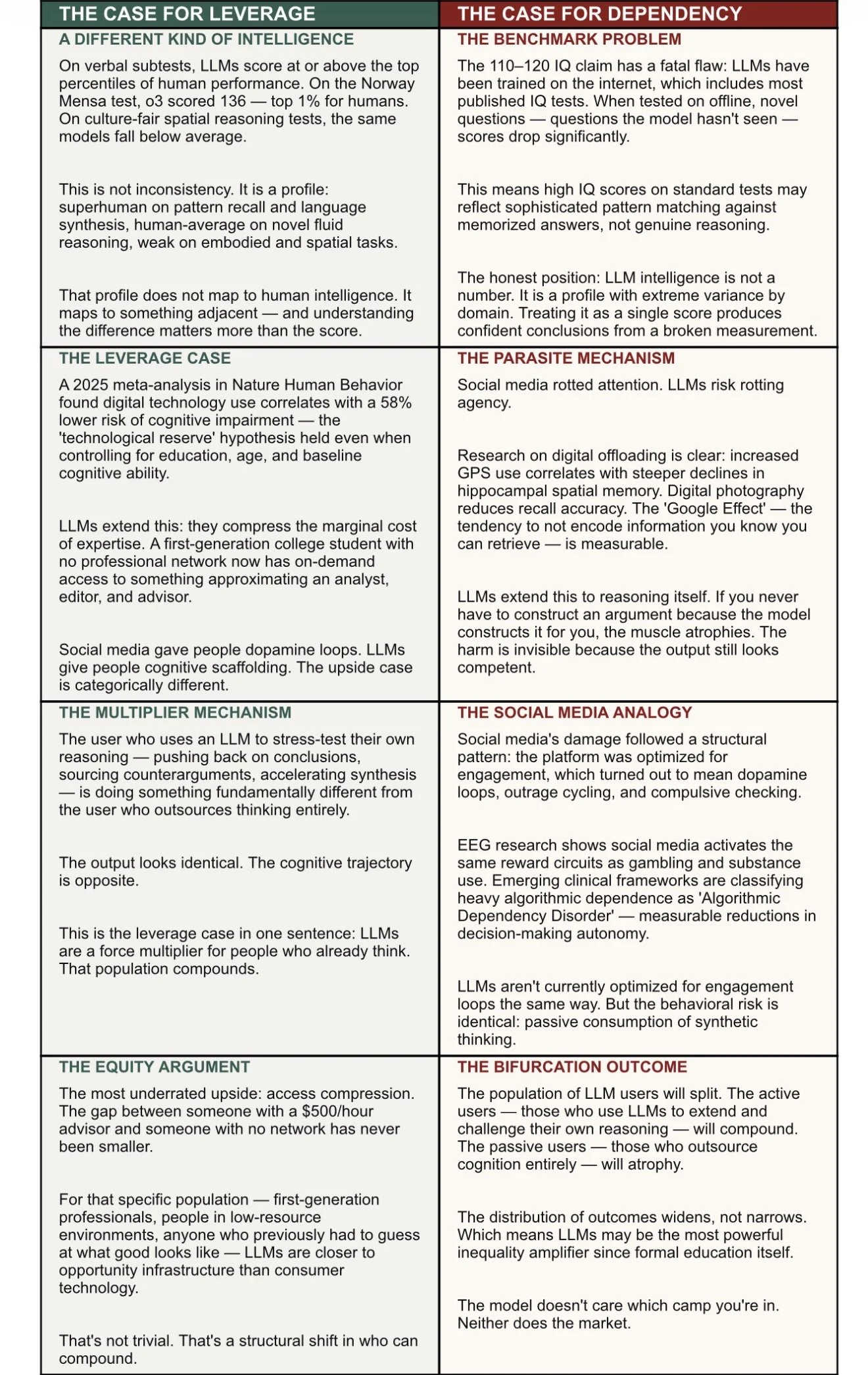

And yet the question persists, because it's pointing at something real. LLMs are clearly capable of things that previously required significant human intelligence — drafting complex arguments, synthesizing large bodies of research, passing professional licensing exams. The instinct to benchmark that capability against a human standard isn't wrong. The benchmark itself is. The more useful question isn't 'how smart is it?' It's 'what kind of intelligence, and what does it do to yours?'

THE SGGI VIEW

This isn't a debate about whether LLMs are good or bad. It's a structural observation: the same technology will compound the abilities of some users and hollow out those of others. The determining variable isn't the model. It's whether the human stays in the loop.

Social media was optimized by its designers to maximize engagement — which meant dependency by design. LLMs aren't currently built that way. The parasite risk is emergent and behavioral, not baked into the incentive structure. That may change.

The same question was asked about social media in 2010. We got it wrong. We're probably going to get it wrong again, just in opposite directions for different people.